Building Mobile Apps with AI: From Concept to App Store

The apps that users love in 2026 have one thing in common: they feel intelligent. They remember your preferences. They suggest what you need before you ask. They understand your voice, recognize objects in photos, and surface personalized content that feels uncannily relevant.

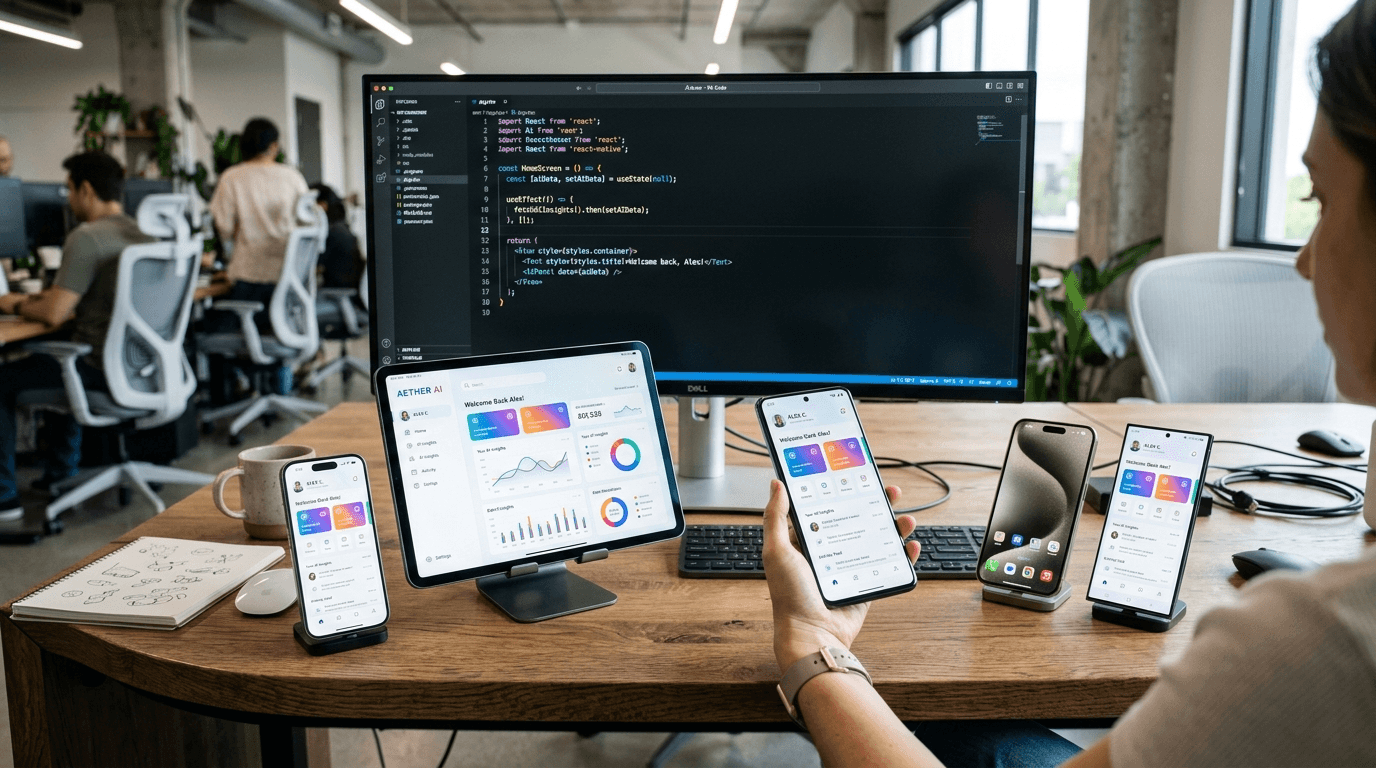

Building mobile apps with AI is no longer the domain of tech giants with hundreds of engineers. Modern tools, frameworks, and APIs have democratized access to powerful AI capabilities — making it possible for lean teams to ship AI-powered mobile experiences that compete with the best.

Why AI Is Now Essential in Mobile Apps

Mobile users have been conditioned by the best apps in the world. They expect their fitness app to know their workout history and adjust recommendations. They expect their shopping app to surface products they will actually want. They expect their communication tools to help them be more productive, not just facilitate messaging.

This is the new baseline. Apps that do not incorporate intelligent features are increasingly perceived as outdated — regardless of how well-designed they might otherwise be.

The good news: the technical barrier to adding AI to mobile apps has never been lower. Between on-device machine learning, cloud AI APIs, and frameworks like React Native and Expo, a skilled team can go from concept to App Store with AI features in a matter of months.

The React Native + Expo Foundation

For most teams building mobile apps with AI features, React Native with Expo is a strong default starting point. Here is why:

- Cross-platform by default — one codebase for iOS and Android, with optional web support

- Massive ecosystem — thousands of packages, many with native modules for hardware access

- Expo SDK — managed workflow handles complex native configurations, OTA updates, and EAS Build/Submit

- JavaScript/TypeScript — the same language your web team uses, lowering the barrier to mobile development

- Strong AI library support — React Native integrates cleanly with TensorFlow.js, ONNX Runtime Mobile, and all major cloud AI SDKs

The Expo ecosystem in particular has matured significantly. EAS (Expo Application Services) handles the entire build and submission pipeline, making it practical to ship to both App Stores with minimal DevOps overhead.

On-Device AI vs. Cloud AI: Choosing the Right Approach

One of the most important architectural decisions when building AI-powered mobile apps is where the AI computation runs.

On-Device AI

Running AI models directly on the user's device offers significant advantages:

- No latency from network round-trips — responses feel instant

- Privacy-preserving — sensitive data (photos, voice, location) never leaves the device

- Offline capability — AI features work without an internet connection

- No API costs — computation runs on the user's hardware

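The trade-off is model size and capability. On-device models must be compact enough to run efficiently on mobile hardware. Models bundled with the app binary typically need to stay under roughly 100MB, which compact vision models such as MobileNet meet comfortably. Small language models such as Phi-3 Mini and Gemma 2B are a different class: even quantized to 4 bits they weigh in at roughly one to a few gigabytes, so they are usually downloaded after install and limited to devices with enough memory.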

Best for: Computer vision, wake word detection, local NLP tasks, offline recommendations, privacy-sensitive features.

Cloud AI

Calling cloud-based models via API offers the inverse trade-off:

- Full model capability — access to GPT-4, Claude, Gemini, and other frontier models

- No model management — the provider handles updates and infrastructure

- Complex reasoning — tasks requiring deep understanding or generation benefit from larger models

- Real-time knowledge — cloud models can be connected to live data

Best for: Complex natural language understanding, content generation, multi-modal tasks, features requiring up-to-date world knowledge.

Most production apps combine both approaches — using on-device models for fast, privacy-sensitive interactions and cloud AI for complex, generative tasks.
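
One way to picture this hybrid split is as a small routing policy that decides, per request, where a task should run. The sketch below is only illustrative: the `AiTask` and `DeviceState` shapes, the `chooseBackend` name, and the specific rules (sensitive data never goes to the cloud, generative or live-data tasks prefer the cloud) are assumptions, not a prescribed design.

```typescript
// Hypothetical routing policy: decide where an AI task should run.
type Backend = "on-device" | "cloud" | "unavailable";

interface AiTask {
  containsSensitiveData: boolean; // e.g. photos, voice, location
  needsGeneration: boolean;       // complex or long-form generative output
  needsLiveData: boolean;         // requires up-to-date world knowledge
}

interface DeviceState {
  online: boolean;
  localModelLoaded: boolean;
}

function chooseBackend(task: AiTask, device: DeviceState): Backend {
  const wantsCloud = task.needsGeneration || task.needsLiveData;
  // Assumed rule: sensitive data is never sent off the device.
  const canUseCloud = device.online && !task.containsSensitiveData;

  if (wantsCloud && canUseCloud) return "cloud";
  if (device.localModelLoaded) return "on-device";
  return canUseCloud ? "cloud" : "unavailable";
}
```

Keeping the policy in one pure function makes it easy to unit test and to adjust as privacy requirements or model capabilities change.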

Core AI Features That Users Love

Personalized Recommendations

Recommendation engines are one of the highest-ROI AI features in mobile apps. Whether recommending products, content, workouts, or restaurants, they keep users engaged by surfacing what is most relevant to them.

Implementation options range from simple collaborative filtering (users who liked X also liked Y) to sophisticated neural recommendation models that incorporate behavioral sequences, context, and explicit preferences.

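Even a basic recommendation system, properly implemented, can noticeably increase session length and retention. Gains of 20-40% in session length are sometimes cited, but results vary widely by product, so measure against your own baseline.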
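
To make the simplest option concrete, here is a minimal sketch of "users who liked X also liked Y" using plain co-occurrence counts. The in-memory `Likes` shape and the `alsoLiked` function are hypothetical; a production system would typically compute this server-side over far more data and with normalization for item popularity.

```typescript
// userId -> set of item IDs that user liked (hypothetical in-memory shape)
type Likes = Map<string, Set<string>>;

// Recommend items liked by users who also liked `itemId`,
// ranked by how many of those users liked each one.
function alsoLiked(likes: Likes, itemId: string, limit = 5): string[] {
  const counts = new Map<string, number>();

  for (const items of likes.values()) {
    if (!items.has(itemId)) continue; // only consider users who liked X
    for (const other of items) {
      if (other === itemId) continue;
      counts.set(other, (counts.get(other) ?? 0) + 1);
    }
  }

  return [...counts.entries()]
    // Highest co-occurrence first; ties broken alphabetically for stable output.
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]))
    .slice(0, limit)
    .map(([id]) => id);
}
```

Even a baseline like this gives you something to A/B test against before investing in a neural model.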

Natural Language Processing

NLP enables apps to understand and respond to text in human terms — not just pattern-matched keywords. Use cases include:

- Smart search that understands intent and synonyms

- Sentiment analysis for reviews or feedback classification

- Summarization of long-form content

- Intelligent categorization of user-generated content

- Conversational interfaces for hands-free or voice-driven workflows

Libraries like Transformers.js and ONNX Runtime Mobile make it possible to run capable NLP models on-device, while cloud APIs (OpenAI, Anthropic, Cohere) handle more complex tasks.
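
Smart search of this kind is commonly built on embeddings: the query and each document are turned into vectors, and results are ranked by similarity. The sketch below shows only the ranking step and assumes the embeddings already exist (produced by whichever on-device or cloud model you choose). `IndexedDoc`, `cosineSimilarity`, and `rankBySimilarity` are illustrative names.

```typescript
interface IndexedDoc {
  id: string;
  embedding: number[]; // assumed to come from an embedding model of your choice
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("Embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  const denom = Math.sqrt(normA) * Math.sqrt(normB);
  return denom === 0 ? 0 : dot / denom; // treat zero vectors as unrelated
}

// Return the IDs of the `topK` documents most similar to the query vector.
function rankBySimilarity(query: number[], docs: IndexedDoc[], topK = 3): string[] {
  return docs
    .map((d) => ({ id: d.id, score: cosineSimilarity(query, d.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((d) => d.id);
}
```

A linear scan like this is fine for a few thousand items on-device; larger catalogs usually call for an approximate nearest-neighbor index.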

Computer Vision

Modern mobile hardware is remarkably capable for vision tasks. Common applications include:

- Object recognition — identify products, plants, food, or landmarks from camera input

- OCR (text recognition) — extract text from photos, receipts, business cards, or documents

- Face and pose detection — for fitness apps, AR experiences, or identity verification

- Quality assessment — evaluate photo quality, detect blur, or assess product condition

Apple's Core ML and Google's ML Kit provide on-device vision capabilities with strong platform integration. TensorFlow Lite (since renamed LiteRT by Google) supports custom models for more specialized tasks.
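
Not every vision feature needs a neural network. As a sketch of the quality-assessment case, blur can be estimated with the variance of a Laplacian filter: sharp images have strong edges and high variance, blurry ones do not. The code assumes you already have a grayscale pixel buffer (how you obtain it from the camera is outside this sketch), and the default threshold is a placeholder to tune on real photos.

```typescript
// Variance of a 4-neighbour Laplacian over a grayscale image
// (values 0-255, row-major). Border pixels are skipped.
function laplacianVariance(gray: ArrayLike<number>, width: number, height: number): number {
  if (gray.length !== width * height) {
    throw new Error("Pixel count does not match dimensions");
  }
  const responses: number[] = [];
  for (let y = 1; y < height - 1; y++) {
    for (let x = 1; x < width - 1; x++) {
      const i = y * width + x;
      responses.push(
        gray[i - width] + gray[i + width] + gray[i - 1] + gray[i + 1] - 4 * gray[i],
      );
    }
  }
  if (responses.length === 0) return 0; // image too small to have interior pixels
  const mean = responses.reduce((sum, v) => sum + v, 0) / responses.length;
  return responses.reduce((sum, v) => sum + (v - mean) ** 2, 0) / responses.length;
}

// The threshold is an assumption; calibrate it against photos from your own app.
function isLikelyBlurry(
  gray: ArrayLike<number>,
  width: number,
  height: number,
  threshold = 100,
): boolean {
  return laplacianVariance(gray, width, height) < threshold;
}
```

In practice you would run this on a downscaled frame so the check stays cheap enough to give feedback before the user leaves the camera screen.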

Voice and Speech

Voice AI in mobile apps goes beyond simple voice-to-text:

- Voice commands — navigate the app, trigger actions, or compose content hands-free

- Real-time transcription — live captioning, meeting notes, or dictation

- Voice authentication — identify users by voice characteristics

- Audio analysis — detect emotions, categorize sounds, or analyze musical content

With the maturation of on-device STT (for example, the smaller OpenAI Whisper models running through ports such as whisper.cpp) and high-quality TTS, voice features are increasingly achievable without cloud dependencies.

The AI-Powered Development Process

Building apps with AI requires a slightly different development process than traditional mobile development.

1. Define AI Feature Hypotheses

Before writing code, clearly define what user problem each AI feature solves and what success looks like. "Add AI recommendations" is not a feature — "Increase repeat session rate by 15% through personalized content surfacing" is.
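
It can help to write each hypothesis down as data, so that "success" is checked rather than debated. The following sketch assumes the 15% target is a relative lift over a measured baseline; the `FeatureHypothesis` shape and the example numbers are hypothetical.

```typescript
interface FeatureHypothesis {
  feature: string;
  metric: string;
  baseline: number;           // metric value before the feature shipped
  targetRelativeLift: number; // 0.15 means "+15% relative to baseline"
}

function isHypothesisMet(h: FeatureHypothesis, observed: number): boolean {
  return observed >= h.baseline * (1 + h.targetRelativeLift);
}

// Example with made-up numbers: a 40% repeat session rate must reach about 46%.
const personalizedFeed: FeatureHypothesis = {
  feature: "personalized content surfacing",
  metric: "repeat session rate",
  baseline: 0.4,
  targetRelativeLift: 0.15,
};
```

Calling `isHypothesisMet(personalizedFeed, 0.47)` would then tell you whether an observed rate of 47% clears the bar.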

2. Data Strategy First

AI models are only as good as their training data. Early in development, instrument your app to collect the behavioral signals your AI features will need. This data strategy is often the difference between AI features that work and those that disappoint.
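
Instrumentation does not have to be elaborate to be useful. Below is a minimal sketch of a batching event buffer; the `BehaviorEvent` fields, the event names in the comments, and the injected `send` uploader are all assumptions standing in for whatever analytics backend you use.

```typescript
interface BehaviorEvent {
  userId: string;
  name: string; // e.g. "item_viewed", "recommendation_tapped"
  properties: Record<string, string | number | boolean>;
  timestamp: number; // milliseconds since epoch
}

class EventBuffer {
  private pending: BehaviorEvent[] = [];

  constructor(
    private readonly flushSize: number,
    // Hypothetical uploader; in a real app this would POST to your backend.
    private readonly send: (batch: BehaviorEvent[]) => void,
  ) {}

  track(userId: string, name: string, properties: BehaviorEvent["properties"] = {}): void {
    this.pending.push({ userId, name, properties, timestamp: Date.now() });
    if (this.pending.length >= this.flushSize) this.flush();
  }

  // Send whatever is buffered, e.g. when the app moves to the background.
  flush(): void {
    if (this.pending.length === 0) return;
    const batch = this.pending;
    this.pending = [];
    this.send(batch);
  }
}
```

Batching keeps network usage low, and a typed event shape makes it harder for inconsistent signal names to creep into the data your models will later depend on.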

3. Start with APIs, Graduate to Custom Models

For most features, starting with a cloud API or pre-trained model is faster than training from scratch. Validate that the feature delivers value before investing in custom model development.

4. Build the AI Infrastructure Layer

Abstractions that manage model loading, caching, fallback behavior, and A/B testing of different model versions are essential for maintaining AI-powered apps in production.
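
As a rough illustration of what such a layer might contain, the sketch below shows two pieces: deterministic A/B assignment of users to model versions, and a wrapper that adds caching and fallback around any prediction function. All names here (`assignVariant`, `withCacheAndFallback`, `Predict`) are hypothetical, and the hash is a simple FNV-1a-style function chosen only for stability, not a recommendation.

```typescript
// Deterministically assign a user to a model variant so the same user
// always gets the same version across sessions.
function assignVariant(userId: string, variants: string[]): string {
  if (variants.length === 0) throw new Error("No variants configured");
  let hash = 0x811c9dc5; // FNV-1a offset basis
  for (let i = 0; i < userId.length; i++) {
    hash ^= userId.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return variants[(hash >>> 0) % variants.length];
}

type Predict<I, O> = (input: I) => Promise<O>;

// Wrap a primary model with a cache and a fallback, for example a cloud
// model backed by a smaller on-device model.
function withCacheAndFallback<I, O>(
  primary: Predict<I, O>,
  fallback: Predict<I, O>,
  keyOf: (input: I) => string,
): Predict<I, O> {
  const cache = new Map<string, O>();
  return async (input: I): Promise<O> => {
    const key = keyOf(input);
    if (cache.has(key)) return cache.get(key) as O;
    try {
      const result = await primary(input);
      cache.set(key, result); // only primary results are cached
      return result;
    } catch {
      // Fallback output is not cached, so the primary is retried next time.
      return fallback(input);
    }
  };
}
```

Because the wrapper returns the same `Predict` type it accepts, feature code does not need to know whether a cache, a fallback, or an experiment sits behind the call.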

5. Evaluate and Iterate Continuously

AI features require ongoing evaluation. Monitor accuracy, user engagement with AI-powered surfaces, and user feedback. Build pipelines for continuous improvement.
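
One simple evaluation signal is how often users act on what the AI suggests, broken down by model version. The sketch below assumes you log one `SuggestionOutcome` per suggestion shown; the field names and the notion of "accepted" are placeholders for whatever engagement signal fits your feature.

```typescript
interface SuggestionOutcome {
  modelVersion: string;
  accepted: boolean; // did the user act on the AI suggestion?
}

// Fraction of shown suggestions that were accepted, per model version.
function acceptanceRateByVersion(outcomes: SuggestionOutcome[]): Map<string, number> {
  const totals = new Map<string, { shown: number; accepted: number }>();
  for (const outcome of outcomes) {
    const t = totals.get(outcome.modelVersion) ?? { shown: 0, accepted: 0 };
    t.shown += 1;
    if (outcome.accepted) t.accepted += 1;
    totals.set(outcome.modelVersion, t);
  }

  const rates = new Map<string, number>();
  for (const [version, t] of totals) {
    rates.set(version, t.accepted / t.shown);
  }
  return rates;
}
```

Tracking this per version makes regressions visible when a new model ships, although small sample sizes still call for a proper significance check before drawing conclusions.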

App Store Considerations

Apple and Google both have policies relevant to AI-powered apps:

- Transparency requirements — clearly disclose AI-generated content to users where relevant

- Privacy labels — accurately represent what data your AI features collect and how it is used

- Content moderation — generative AI features require robust content filters to prevent policy violations

- Review sensitivity — apps that generate text, images, or voice may receive additional scrutiny during review

These are manageable considerations, not blockers — but they are easiest to address when incorporated into the design from the start.

Getting Your AI Mobile App to Market

The full path from concept to App Store typically looks like this:

- Discovery & Architecture (1-2 weeks) — define AI features, data strategy, and technical architecture

- Foundation (2-4 weeks) — core app structure, navigation, authentication, AI infrastructure layer

- AI Feature Development (4-8 weeks) — implement, evaluate, and iterate on AI features

- Integration & Polish (2-3 weeks) — connect all components, optimize performance, handle edge cases

- Testing & Submission (1-2 weeks) — QA, App Store assets, review submission

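A focused team using React Native and Expo can realistically ship a capable AI-powered app in 10-16 weeks. Run strictly back to back, the phases above add up to 10-19 weeks, so the upper end of that estimate assumes some overlap, for example starting AI feature work while the foundation is still being finished. The efficiency gains from cross-platform development and the Expo ecosystem make a meaningful difference.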

At AIgentic.media, we have guided multiple mobile app projects from initial concept through App Store launch. We bring both the technical AI expertise and the mobile development experience to build apps that users actually love.

Conclusion

AI-powered mobile apps are no longer just technically feasible for small teams. They are fast becoming commercially essential for any app that wants to compete for user attention. The frameworks exist. The APIs are accessible. The tooling is mature.

What differentiates the apps that win is thoughtful application of AI to genuine user problems — combined with the craft to implement those features beautifully. That is the challenge and the opportunity for every team building mobile software today.